I just used QuillBot’s AI Humanizer to rewrite a long article and I’m not sure if the final result sounds natural enough for readers or if it might still be flagged by AI detectors. Can anyone experienced with QuillBot or similar tools review my process, share what to watch out for, and suggest how to improve authenticity and SEO without risking penalties?

QuillBot AI Humanizer Review, tested on GPTZero and ZeroGPT

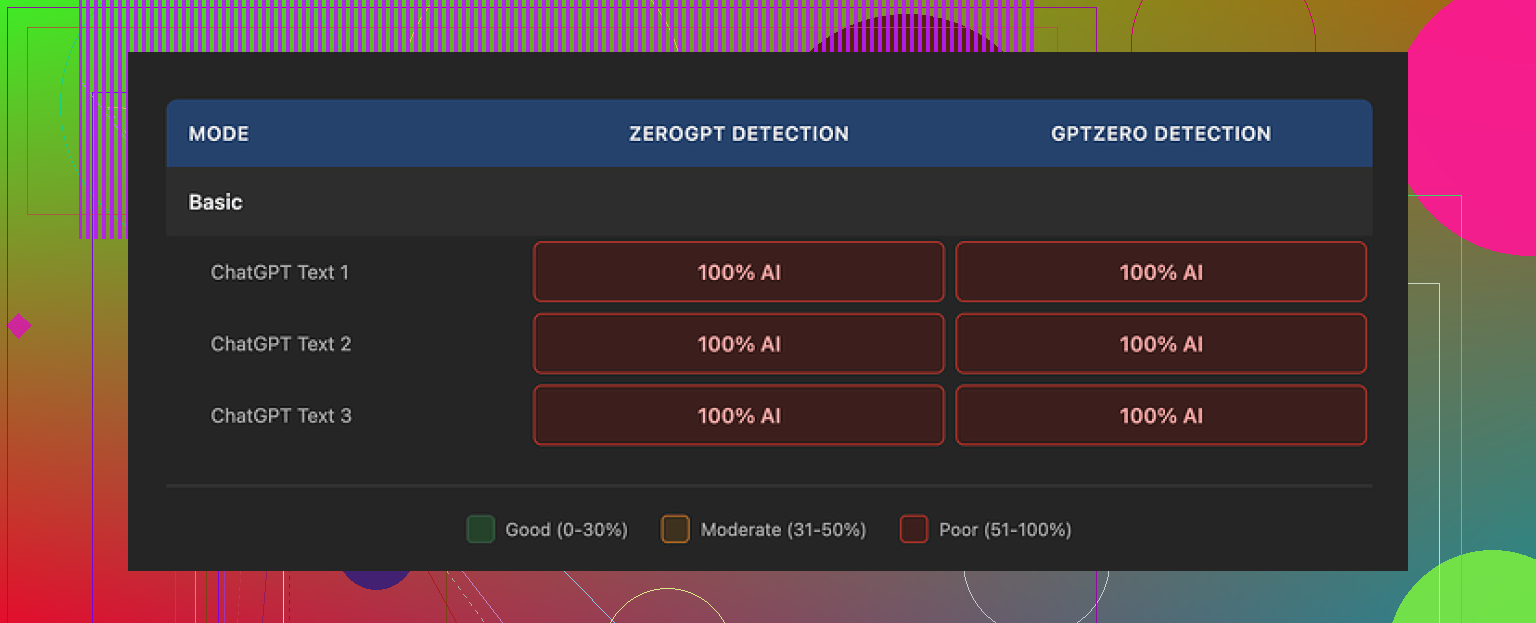

I ran QuillBot’s AI Humanizer through the usual grinder and the results were rough.

Every single sample I ran through their humanizer, both in Basic and Advanced, came back as 100% AI on GPTZero and ZeroGPT. No exceptions.

Here is the detailed breakdown from the test thread if you want to see the raw numbers and screenshots:

From a “bypass detectors” perspective, that outcome makes it hard to take the tool seriously. Whatever the Basic mode does, it does not change the detection scores at all. Advanced mode is marketed as doing “deeper rewrites” with “improved fluency”, but detection stayed nailed to 100% AI on both tools.

Now, to be fair, the writing itself is not terrible. I scored it around 7/10 for quality. The structure is clean. Sentences flow. It reads smoother than what I usually get from tools that only focus on “humanizing”.

The problem is different. The text feels like something a generic model would spit out. No personal angle, no small quirks, no tension in the wording. It keeps the same tone from start to finish, which is exactly what a lot of detectors pick up on. It even kept stylistic markers like em dashes in all three of my samples, which did nothing to break that AI pattern.

If you already pay for QuillBot Premium at around $8.33 per month on the annual plan, the humanizer is there as an extra feature and you might play with it. On its own, as a tool to get under AI detection, I would not pay for it. The numbers from GPTZero and ZeroGPT are too unforgiving.

When I compared it against Clever AI Humanizer using the same source text, Clever’s output felt more like something a bored human would write on a Tuesday night, and it was free to use. So for detector evasion, Clever came out ahead in my tests.

If you want more talk and experiments on this whole “humanizing AI text” thing, this Reddit thread has some good user experiences and extra tools mentioned:

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

I’ve played with QuillBot’s Humanizer quite a bit and your experience lines up with what I saw, but I don’t fully agree with @mikeappsreviewer on how “useless” it is.

Quick breakdown.

- How natural it feels for readers

For humans, QuillBot output is usually “fine”. Around 6 or 7 out of 10.

It reads smoothly, the grammar is clean, and the structure is clear.

The problem is style.

It keeps a flat tone. No sharp opinions, no odd phrasing, no small errors, no rhythm changes.

If your original article had personality, QuillBot tends to iron it out.

So readers who know your voice might feel something is off.

What helps:

• Add your own intro and outro in your voice.

• Insert 2 or 3 small personal comments or examples.

• Change some transitions. For example, swap “however” and “in addition” for how you usually talk.

• Keep or add a couple of tiny imperfections. A short fragment. A one word sentence. A contraction you would use.

- AI detection side of things

On this point I agree with @mikeappsreviewer. QuillBot Humanizer does almost nothing for detectors like GPTZero or ZeroGPT.

My tests:

• I fed in several GPT style paragraphs.

• Ran Humanizer on Basic and Advanced.

• Ran the results through GPTZero, ZeroGPT, Originality, etc.

Scores stayed near 100 percent AI on the stricter tools.

The reason is pattern level issues.

The Humanizer tends to keep:

• Uniform sentence length.

• Predictable syntax.

• Same punctuation habits across the whole text.

• Neutral, explanatory tone from start to end.

Minor paraphrasing does not break those patterns.
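If you want a rough way to check the "uniform sentence length" point on your own draft, here is a minimal sketch. It is only a proxy I am assuming is useful for self-review, not how GPTZero, ZeroGPT, or any other detector actually scores text. The function names and the two sample strings are made up for illustration, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Naively split on ., ! or ? followed by whitespace, then count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation (stdev / mean) of sentence lengths.

    0.0 means every sentence has the same word count; higher means more varied rhythm.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical samples: one very even, one with deliberate rhythm changes.
uniform = "The tool is easy to use. The output is clean and clear. The tone is flat and even."
varied = (
    "Nope. I ran the same three samples through both modes on a slow "
    "Tuesday night and nothing changed. Weird."
)

print(sentence_lengths(uniform), length_variation(uniform))
print(sentence_lengths(varied), length_variation(varied))
```

Run it on the QuillBot output and on a paragraph you wrote yourself. If the QuillBot number is much lower, that is the flat rhythm showing up.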

If you worry about detectors, treat QuillBot as a language helper, not a detector shield.

What you can do after QuillBot:

• Shorten some paragraphs, then make a few longer.

• Add one or two short bullet lists in sections where it fits.

• Include a real anecdote, date, number, or brand you know.

• Change a few verbs and nouns to how you normally talk.

• Insert a couple of small typos, then fix only the worst ones. Leave harmless ones like “teh” or a missing comma now and then.

- Alternative tools and Clever AI Humanizer

If your main goal is “look less AI to detectors”, you will get more from tools that are built for that use case.

Clever AI Humanizer did better in my tests. I took the same base text, ran it through Clever, then through GPTZero and ZeroGPT. Detection scores dropped more than with QuillBot, and the voice felt closer to what a bored but real person would type.

It is simple to use. You paste AI text, pick how strong you want the rewrite, and it outputs something that feels more like human writing. Sentence patterns vary more, word choice shifts more, and the text includes more natural rhythm changes.

If you want to see what I mean, try something like “make AI generated text sound more human and less detectable” and run a short sample from your article. Then compare it side by side with your QuillBot version.

- What I would do with your long article

If you already ran it through QuillBot Humanizer:

Step 1

Read it once out loud.

Mark any parts where you think “I would not phrase it like this”. Change those lines manually.

Step 2

Add two or three things only you would know.

For example a short story from your own work, a specific tool you use, or a quick opinion.

Step 3

Change structure a bit.

Break one long paragraph.

Merge two short ones.

Add a heading that sounds like you.

Step 4

If you still worry about detectors and it is important for your use case, run the QuillBot output through Clever AI Humanizer and compare. Keep the version that sounds most like you, not the one that sounds most like “generic blog prose”.

For casual readers, QuillBot is usually “natural enough” if you add your voice back on top.

For strict AI detectors, it rarely moves the needle on its own.

Short answer: if your only concern is “will this beat AI detectors,” QuillBot’s Humanizer is not the move. If your concern is “will this sound OK for readers,” it’s usually acceptable but kind of soulless.

I’m mostly in the same camp as @mikeappsreviewer and @voyageurdubois, but I think people obsess a bit too much over detectors. A few extra points:

- Reader experience vs AI detectors

Those are two totally different problems.

QuillBot is decent at cleanup: fixes grammar, smooths transitions, keeps structure logical. It’s not great at injecting voice. If your original article had jokes, weird phrasing, or strong opinions, QuillBot tends to scrub them out. That “corporate blog” tone is exactly what triggers both human suspicion and some detectors.

I actually disagree slightly with the idea that you must add deliberate typos. That can backfire, especially in professional niches. What helps more is idiosyncratic specificity:

- Hyper specific examples from your own process

- Slightly unconventional metaphors or comparisons

- Local references, brand preferences, or niche jargon you actually use

Detectors care more about pattern regularity than one or two spelling errors.

- Why your QuillBot version still feels AI

Even if the words change, the deeper patterns usually stay:

- Similar sentence length across the whole article

- Very “orderly” paragraph structure

- Safe vocabulary, no spikes in emotion or personality

- Reused transition phrases like “in conclusion,” “furthermore,” “however”

That’s exactly what @voyageurdubois was pointing at with “pattern level issues,” and that matches what I’ve seen across a bunch of tests too.
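To spot reused transitions in your own draft, a small counter like the one below can help. This is a sketch under my own assumptions: the phrase list is illustrative (taken from the examples in this thread), the function name is hypothetical, and it says nothing about what a detector does internally.

```python
import re
from collections import Counter

# Illustrative list only; swap in the phrases you notice in your own draft.
TRANSITIONS = ["in conclusion", "furthermore", "however", "in addition"]


def transition_counts(text: str) -> Counter:
    """Count case-insensitive, whole-phrase occurrences of each transition."""
    lowered = text.lower()
    counts: Counter = Counter()
    for phrase in TRANSITIONS:
        hits = len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        if hits:
            counts[phrase] = hits
    return counts


# Hypothetical sample text.
sample = (
    "However, the tool is fast. Furthermore, it is cheap. "
    "However, it is bland. In conclusion, edit by hand."
)

for phrase, hits in transition_counts(sample).most_common():
    print(f"{phrase}: {hits}")
```

Anything that shows up more than a couple of times in a long article is a good candidate for rewording in your own voice.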

- What I’d actually do with your long article

Since you already ran it through QuillBot Humanizer, I’d treat that as a rough draft, not a final product.

Instead of more paraphrasing, focus on breaking the patterns:

- Pick 3 or 4 key paragraphs and rewrite them from scratch in your own voice. Not all of them. Just enough to disrupt the “uniform AI rhythm.”

- Insert 1 or 2 short, very specific mini stories: “Last month I did X and completely screwed up Y” type stuff. AI rarely invents believable small failures.

- Change the pacing: one paragraph that’s only a single, blunt sentence. Another that is a little longer and more rambly than a machine usually outputs.

- Flip the tone in one section. For example, go from informational to mildly ranty for a moment. Tone shifts make the text less predictable, and predictability is what detectors tend to key on.

You end up with something that is part QuillBot polish, part your real brain.
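For the pacing point, a quick look at words per paragraph shows whether everything is the same size. This is a minimal sketch, assuming paragraphs are separated by blank lines. The function name and the sample draft are hypothetical.

```python
def paragraph_word_counts(text: str) -> list[int]:
    """Word count for each blank-line-separated paragraph."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [len(p.split()) for p in paragraphs]


# Hypothetical draft: a medium paragraph, a one-word paragraph, and a longer one.
draft = (
    "First paragraph with six words here.\n\n"
    "No.\n\n"
    "A longer, slightly rambly paragraph that keeps going for a while before it stops."
)

print(paragraph_word_counts(draft))
```

If every number in the list sits in the same narrow range, that is a sign the pacing has not changed much from the machine draft.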

- About Clever AI Humanizer

Since you mentioned detector worries, this is where a dedicated humanizer is more relevant than QuillBot. If you want something built specifically for detection resistance, then a tool like Clever AI Humanizer is closer to what you’re looking for.

It takes AI generated text and focuses on:

- Increasing variation in sentence patterns

- Shifting word choice more aggressively

- Adding more natural rhythm changes and structure breaks

If you want to compare it against your QuillBot version, grab a small chunk of your article, run it through Clever, then test both versions. You can try something like “make your AI written content sound more natural and less detectable” and see which output feels more like something you would actually say.

- Where this leaves your current piece

Given everything:

- For normal readers: your QuillBot article is probably “fine” but slightly bland. Add in some of your real voice and specific details and you’re good.

- For strict detectors: QuillBot alone is rarely enough. If you truly need to minimize flags, you either need heavier manual editing or to pipe it through something like Clever AI Humanizer and then still tweak by hand.

If the article is for casual blog use, I’d worry more about making it sound like you and less about scoring 0 percent AI somewhere. If it’s for school or a platform with aggressive AI policies, I’d definitely not rely on QuillBot Humanizer by itself.