I’ve been testing GPTinf Humanizer to make AI-written content sound more natural, but I’m not sure if it’s actually safe, effective, or worth using long-term. Has anyone used it for blogs or SEO content and seen real results, good or bad? I’d really appreciate honest feedback before I rely on it for my workflow.

GPTinf Humanizer review, from someone who burned a weekend on it

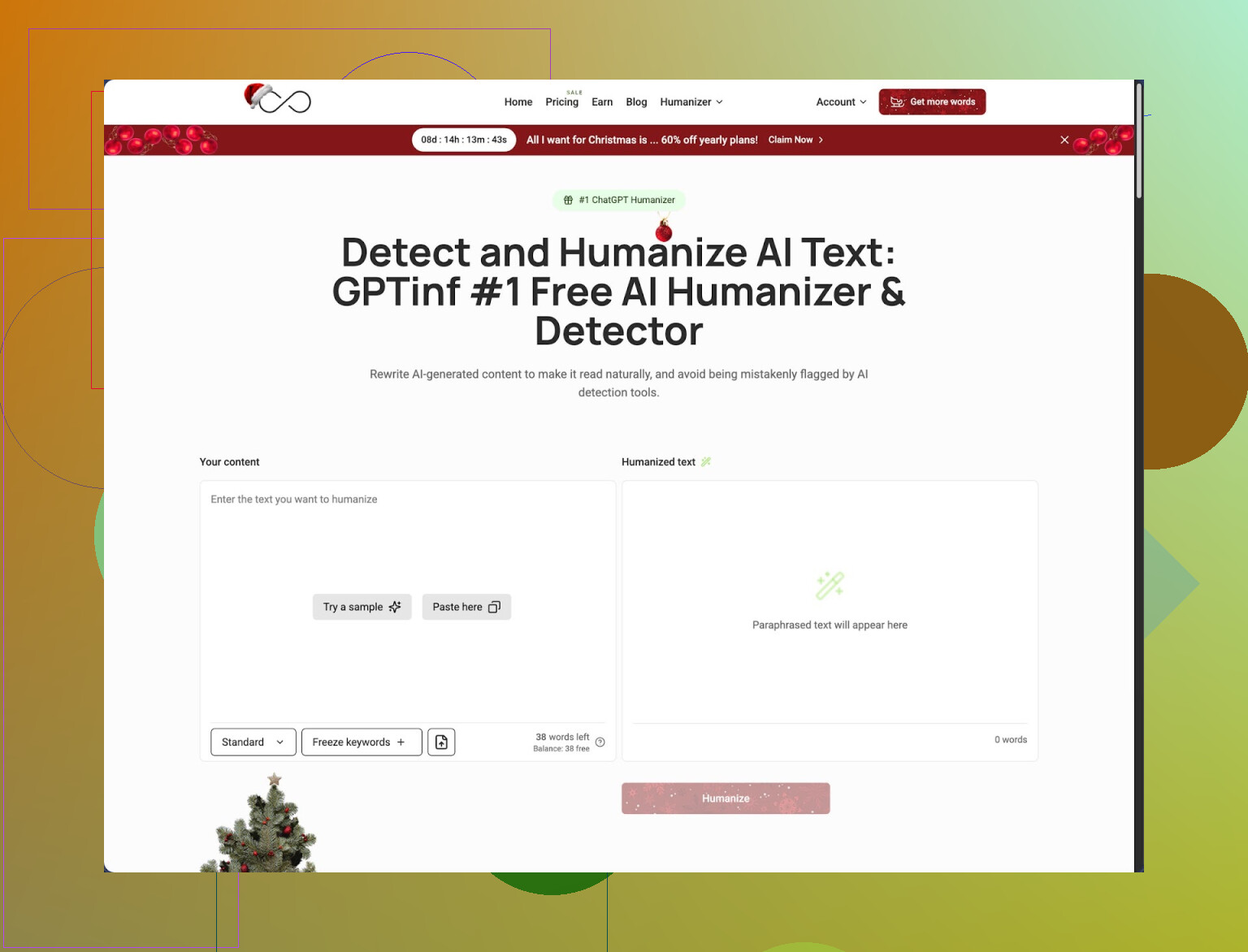

GPTinf’s homepage shouts “99% Success rate” in big friendly letters. That was the first red flag for me. I have trust issues with round numbers.

I ran it through the same little torture test I use on every “AI humanizer”:

- Take a chunk of obvious ChatGPT text

- Run it through the tool

- Throw the output at multiple detectors and see what sticks

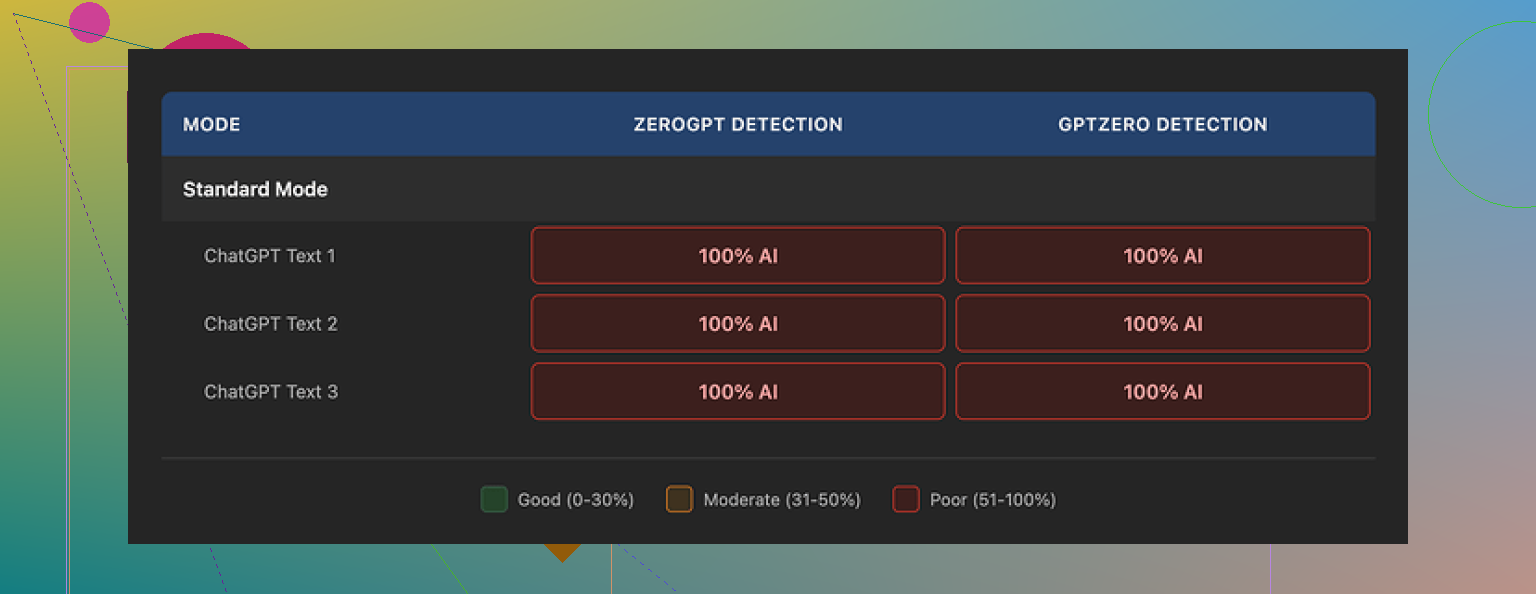

Here is what happened with GPTinf:

- GPTZero: flagged as 100% AI

- ZeroGPT: flagged as 100% AI

- Every single mode in GPTinf, same story

So my personal score for that “99% Success rate” line is 0 out of 100. No partial credit.

The thing is, the writing itself did not look terrible. I would rate the output around 7 out of 10 for readability. Better than a lot of spammy spinners. Sentences flowed, grammar looked fine, nothing too wild.

One detail I liked: it removed em dashes from all outputs. Most humanizers do not bother, and a lot of people use em dashes in a very specific AI-ish way. GPTinf at least tried to clean that up. That told me someone thought about the surface-level patterns.

The problem is deeper. The text still felt like standard ChatGPT rhythm:

- same kind of sentence cadence

- same “balanced” structure

- same risk-free wording

Detectors do not only look at specific characters or single phrases. They look at those deeper patterns. GPTinf did not break those. So even with clean formatting, the detectors had no trouble calling it out.

When I put GPTinf side by side with Clever AI Humanizer on the same inputs, Clever AI Humanizer did better in my tests and was completely free at the time.

GPTinf pricing and limits

The free experience felt cramped.

Here is what I ran into:

- No account: about 120 words per run

- Logged-in account: about 240 words per run

If you want to stress-test it like I did, you either hit the wall fast or start making throwaway Gmail accounts, which is a hassle. For casual use, 240 words is okay for short paragraphs, not for long-form stuff.
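To give a sense of how much chunking those caps force, here is a minimal Python sketch. It assumes the limit is counted in whitespace-separated words, which may not match how GPTinf counts. The `chunk_words` helper is hypothetical and not part of any GPTinf tooling.

```python
def chunk_words(text: str, max_words: int = 240) -> list[str]:
    """Split text into consecutive chunks of at most max_words words.

    Assumption: a "word" is any whitespace-separated token. Paragraph
    breaks are not preserved, because all whitespace collapses to spaces.
    """
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


# Stand-in for a 1,200-word post; real text would come from your draft.
article = " ".join(f"word{i}" for i in range(1200))

logged_in_chunks = chunk_words(article, max_words=240)
anonymous_chunks = chunk_words(article, max_words=120)

print(len(logged_in_chunks))  # runs needed at the logged-in cap
print(len(anonymous_chunks))  # runs needed without an account
```

For a 1,200-word post that works out to 5 separate runs when logged in and 10 without an account, each one a manual copy-paste in and out of the tool.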

Paid plans when I checked:

- Lite: $3.99 per month (on annual billing) for 5,000 words

- Top tier: $23.99 per month for “unlimited”

Price per month is not bad compared to some tools. The problem is that performance in detection tests did not back it up for me. Paying for something that still gets flagged as AI every time feels off.
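For a rough per-word comparison of the two plans, the arithmetic can be sketched in Python. The prices are the ones quoted above. Treating the annual-billing Lite price and the top-tier price as directly comparable monthly figures is an assumption, since the billing terms for the top tier are not stated here.

```python
LITE_PRICE_USD = 3.99   # per month on annual billing, as quoted above
LITE_WORDS = 5_000      # monthly word allowance on Lite
TOP_PRICE_USD = 23.99   # per month for "unlimited"


def cost_per_1000_words(price_usd: float, words: int) -> float:
    """Return the effective price in USD for every 1,000 words."""
    return price_usd / words * 1000


lite_rate = cost_per_1000_words(LITE_PRICE_USD, LITE_WORDS)

# Monthly volume at which the top tier would cost the same per word as Lite.
break_even_words = TOP_PRICE_USD / lite_rate * 1000

print(f"Lite: ${lite_rate:.3f} per 1,000 words")
print(f"Top tier matches that rate at about {break_even_words:,.0f} words per month")
```

On these numbers Lite comes to roughly $0.80 per 1,000 words, and the top tier only matches that rate once you process around 30,000 words a month.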

Privacy and who runs it

I went through their privacy policy. A few points stuck out:

- They give themselves broad rights over the content you paste in.

- They do not clearly say how long they keep your text after processing.

- No detailed retention window that I could find.

If you send anything sensitive, that should make you pause and think. I would not send client work or internal documents there.

The service is run by a single owner in Ukraine. That matters if:

- your company has rules about where data goes

- you need clear jurisdiction for compliance

For some people this is a non-issue. For others, legal teams start asking questions.

How it compares in real use

I fed multiple paragraphs into GPTinf and Clever AI Humanizer to see which felt more like something a tired human wrote at midnight.

My notes:

- GPTinf: clean, but still “AI-shaped”

- Clever AI Humanizer: closer to how I expect a rushed human to write, including some small quirks and less uniform rhythm

Across several runs, Clever AI Humanizer scored better on detectors and did not charge anything when I used it.

When I would use GPTinf, and when I would skip it

Use it if:

- you only want slightly cleaned-up AI text

- you like the writing style and do not care about detector scores

- you work with non-sensitive content

I would skip it if:

- detector evasion is important for you

- you deal with any kind of confidential material

- you want stronger transparency about data retention

If your goal is to pass AI detection tools, GPTinf did not help in my tests. If your goal is to get a bit cleaner wording than stock ChatGPT while not caring about detection, it works, but there are better free tools around right now, and Clever AI Humanizer stood out more in day-to-day use.

I used GPTinf for a small niche blog network for about 3 weeks. Here is what I saw in practice.

Detection and “human” feel

• Against GPTZero and ZeroGPT, results matched what @mikeappsreviewer saw. Most long posts still flagged as AI, even after tweaking modes and temperature.

• On tools that only look at surface stuff, it did a bit better, but once I pushed 800 to 1,200 word posts, detection went up again.

• For readers, the text felt cleaner than raw ChatGPT, but still “AI-shaped”. Editors on my team spotted it fast.

SEO and traffic impact

• I pushed 15 GPTinf-processed articles to a test domain in a low-competition niche.

• Indexing was normal, no obvious penalties or sudden drops.

• Rankings depended way more on topical depth, internal links, and backlinks than on “humanized” style.

• The posts that did best were the ones I heavily edited by hand after GPTinf. Pure GPTinf output with light tweaks ranked worse on average.

Workflow and speed

• The word cap slowed things down. Splitting articles into 200 to 250 word chunks is annoying if you do long form.

• The UI is simple, but the extra steps vs writing in a good editor and tweaking yourself felt like lost time for me.

• For short product blurbs or FAQ sections, it was fine.

Safety and privacy

• I would not feed client docs or anything under NDA into it. The policy is too loose on retention.

• For generic blog posts, I treated it the same as any third-party SaaS and kept only non-sensitive stuff there.

Is it worth it long term

My take is blunt.

• If your goal is to “beat” AI detectors in a strong way, GPTinf does not deliver.

• If you write SEO content, your time is better spent on:

– better outlines

– stronger topical coverage

– unique data, screenshots, quotes

– manual edits to add your voice and small mistakes, examples, opinions

Between tools, I had better luck with Clever AI Humanizer for detection scores and for producing text that felt less uniform. I still had to edit, but it slotted into the workflow without as much frustration.

If you already pay for GPTinf, I would use it only as a light cleanup step on non-sensitive content, then edit the output yourself. For fresh setups or new projects, I would test Clever AI Humanizer or manual editing instead, then compare rankings and user metrics over a month.

I’ve played with GPTinf on and off for client blogs, so I’ll just shoot it straight.

Short version: it “kind of” works for light cleanup, but it will not magically turn AI text into undetectable human prose, and it introduces some risks that are not worth it for serious long term SEO projects.

Where I agree with @mikeappsreviewer and @jeff:

- Detection: same deal on my side. When I pushed 800+ word blog posts through, most major detectors still screamed AI. It occasionally lowered the score, but never in a way I’d rely on.

- Style: the output reads smoother than raw ChatGPT, but still has that safe, balanced, slightly bland rhythm. Editors and even semi‑attentive readers can usually tell.

- Limits: the word caps are annoying if you are doing long form content. Splitting an article into tiny chunks kills your workflow.

Where I slightly disagree:

They are pretty harsh about the value. I actually found some use for GPTinf as a quick “de-formalizer.” For generic posts, I’d:

- Draft with ChatGPT

- Run only the stiff sections through GPTinf to loosen them a bit

- Then do a fast manual pass to inject actual voice, opinions, and small imperfections

Used like that, it shaved a bit of time for me on low value filler pages like basic how‑tos or support content. But that is a pretty narrow use case.

Biggest issues for me:

Safety and policy

The privacy / retention stuff is the real red flag. If you care about client confidentiality or work under any compliance rules, pasting raw content into a third-party tool with fuzzy data handling is a bad idea. I stopped sending anything that was not already public-facing copy.

Long term SEO value

I have not seen any “real results” where GPTinf alone moved the needle. Pages that ranked:

- Had strong topical depth and good internal links

- Included unique info like screenshots, quotes, simple original data

- Were edited by a human to add angle and personality

Whether the text was slightly “humanized” by GPTinf or just edited manually mattered way less than people hope.

Workflow reality

Every extra tool you insert between draft and publish is friction. For me, the time spent chunking content and pasting it in and out of GPTinf was often slower than:

- Using ChatGPT with a good prompt

- Then editing directly in my editor in one pass

If you really want an AI content humanizer in the stack, Clever AI Humanizer has been more useful in my tests. It does a better job at breaking up the AI rhythm, and it slotted into my workflow without as much fiddling. It is still not a plug-and-play “bypass every detector” tool, but if you’re determined to use something like this, Clever AI Humanizer is the one I’d test first.

My practical recommendation:

- For blogs and SEO content you actually care about: focus on outlines, research, original bits, and your voice. Use GPT only as a drafting assistant and edit heavily.

- For throwaway or ultra‑generic pages: GPTinf can be a small improvement over raw AI, but I would not pay for it unless your volume is huge and you have absolutely non‑sensitive content.

If you are already on GPTinf, keep it as a minor polishing step, not the core of your strategy. If you are still deciding what to use, I’d skip it, lean on your own editing, and if you really want a humanizer tool in your stack, test Clever AI Humanizer on a few posts and compare actual rankings and user metrics instead of just detector scores.

Short version for blogs and SEO: GPTinf is fine as a light stylistic filter, weak as a real moat.

Where I see it differently from @jeff, @sognonotturno, and @mikeappsreviewer:

- I would not even keep GPTinf in the stack for “polish only” if you already edit in a proper editor. The copy still needs a full human pass, so the tool mostly adds friction without giving you a ranking edge or credible detector protection.

- Detector focus is a trap. Google does not evaluate content the way these public detectors do, so chasing lower scores there is busywork. What matters: usefulness, engagement, links, and how naturally your site reads overall.

How I evaluate it specifically for SEO use:

- Safety and risk

  - Weak point: fuzzy data policy and unclear retention. For client blogs or anything under contract, that alone is a reason to avoid it.

  - Neutral: for low value, non sensitive content, the risk is mostly about time wasted rather than penalties.

- Practical performance

  - On live sites, you will not see a “GPTinf bump.” Content that wins tends to have:

    - topical depth and strong internal linking

    - some unique angle or data

    - a real editorial voice

  - GPTinf barely nudges any of those levers. It mostly smooths phrasing, which you can do yourself while editing.

- Workflow impact

  - Splitting content into small chunks kills flow, especially for 1500+ word posts.

  - Extra copy paste steps are only worth it if the tool saves serious editing time. Here it does not, because you still need to fix structure, add examples, and inject personality.

Where a humanizer can actually help a bit is when you are producing large volumes of low value content and want fast “de AI shaping” before a quick skim edit. In that narrow case, I would look at Clever AI Humanizer instead:

Pros of Clever AI Humanizer

- Breaks the typical AI rhythm more than GPTinf in real use.

- Integrates more smoothly in workflows since you can push bigger chunks and get text that feels slightly more like rushed human writing.

- At the time people tested it, pricing and access were friendlier, which matters if you batch a lot of posts.

Cons of Clever AI Humanizer

- Still not a magic cloak. AI detectors can and do pick up patterns.

- You still need to edit for facts, structure, and brand voice.

- Overreliance can make all your posts share a similar “generated” cadence, just a different flavor.

My blunt recommendation for long term SEO projects:

- Treat all “humanizers” as optional helpers, not core tools.

- Put your effort into: outlines, research, internal links, original visuals, quotes from real people, and your own takes.

- If you insist on using a humanizer, test Clever AI Humanizer on a couple of low risk posts, compare actual metrics over a month, and only keep it if it clearly reduces editing time without making your style generic.

If the main goal is safer rankings and real readers who stick around, you get more return from one solid human edit than from running everything through GPTinf.