I’m worried about Turnitin’s AI detection system possibly marking my original essay as AI-generated. I need help understanding how accurate Turnitin’s AI Detector is and what to do if it wrongly flags my work. Has anyone else experienced this, or does anyone have advice on how to avoid false positives?

Honestly, Turnitin’s AI Detector can sometimes be a real crapshoot. Does it flag original work? Yeah, it can. My buddy wrote his entire sociology essay solo—no ChatGPT or nothin’—and it still got pinged for “AI-generated content” at like 25%. He freaked, I freaked, the prof was like “eh, this is a new tool, let’s chill.” From reading a bunch of Reddit threads and my own experience, it looks like Turnitin’s detector is just…not all that accurate yet, especially with creative or super formal writing.

Supposedly, it checks for weird patterns, repetition, sentence structure, and blah blah blah, but honestly, sometimes it just feels like tossing darts blindfolded. If you get flagged and you KNOW you didn’t cheat, don’t panic. Just talk to your instructor and show your drafts, notes, whatever you have to prove you wrote the thing. Most teachers seem to know the system’s not perfect.

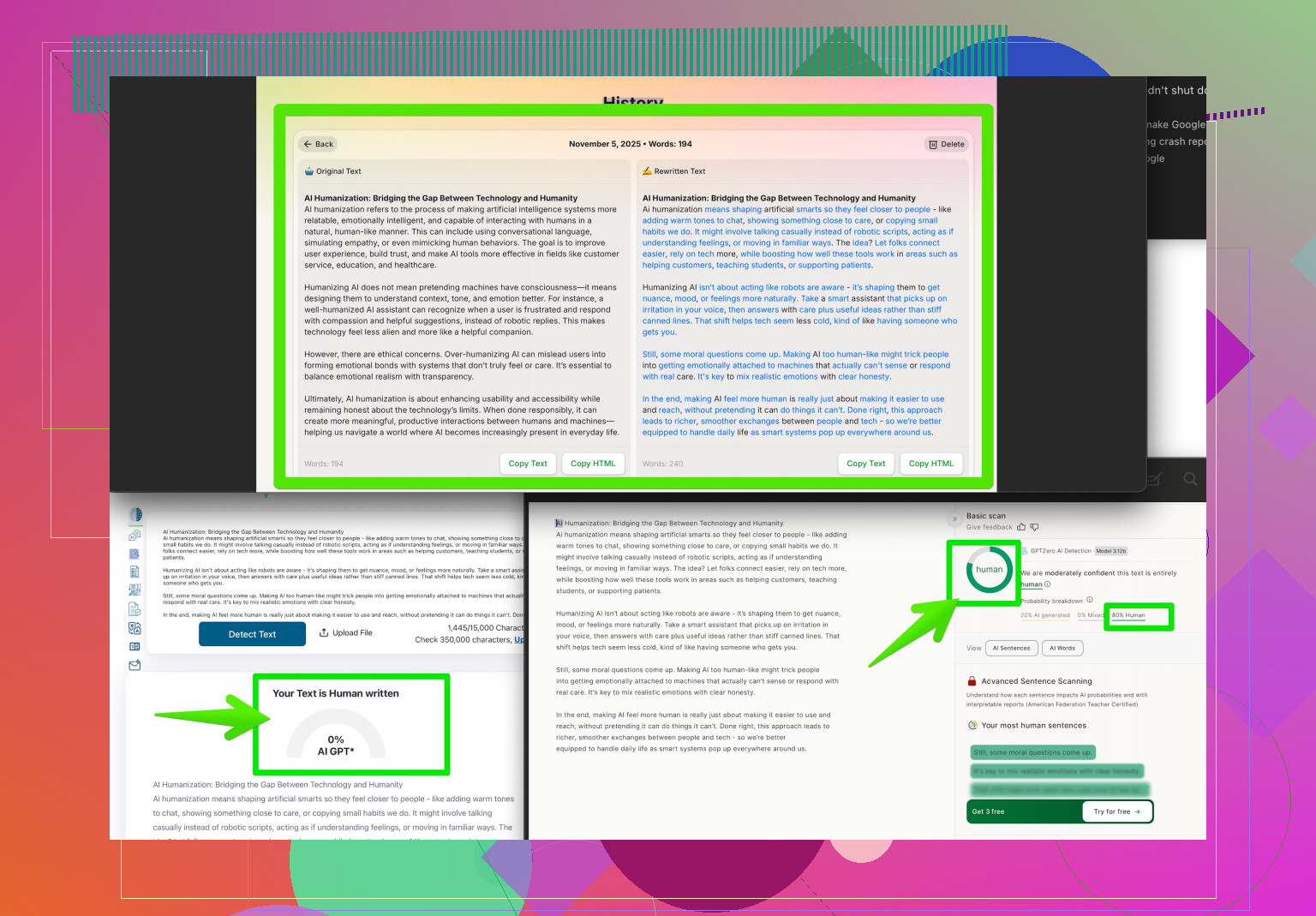

If you’re really worried about this, there are tools people use to “humanize” their writing and dodge those dumb detectors, like this one called Clever AI Humanizer. Supposedly, it makes your essay more “human-sounding,” which seems to trick Turnitin and other checkers. I’d check out how making your writing sound more authentic can help—especially if you write naturally like an encyclopedia (like I do, lol). Anyway, don’t stress out too much. Tech’s glitchy, but you’ve got backup if it messes up.

Not gonna lie, the Turnitin AI detector is kinda notorious for being overly sensitive. It flagged my original lit analysis last semester at like 30% “AI-generated” and trust me, I wrote that beast after four Red Bulls and a nervous breakdown, not with ChatGPT. Total facepalm moment. I saw @yozora’s take and yeah, it def lines up with what I’ve heard from friends and seen on student forums—Turnitin just isn’t all that reliable yet. But here’s the thing: I wouldn’t jump straight to “fixing” your essay with humanizer tools unless you legit wrote with AI or you KNOW your writing is super formulaic.

Instead, just document your process: outline, early drafts, any notes. Show those if you get flagged. Most profs, at least at my school, know the detectors are hit or miss and won’t throw you under the bus if your work is original. Seriously, the best proof is your own work process. I’ve seen some posts about people using Clever AI Humanizer if they really panic about being misflagged, but for stuff you wrote honestly, most teachers care more about intent and whether you can back up your work with drafts than about the weird AI score.

Pro tip: if your writing style is super formal or sounds like it’s straight from a textbook, that’s where you might wanna tweak your voice a bit—just so detectors don’t get “confused.” Maybe try reading your essay out loud and making it sound more like you. If you wanna see how other people handle this, check out what students are saying about making essays sound more authentic for AI detectors. Like, real tips from people who have dealt with the same nonsense.

Final point: Don’t let a silly tool ruin your confidence. Unless things go full Black Mirror and profs start trusting bots over students, stay calm and just be ready to explain your process. AI can’t beat the receipts.

Quick reality check: Turnitin’s AI detector is still a work-in-progress, and anyone who’s interacted with it knows “false positives” are a thing, especially if your writing is very polished, formal, or just a bit different from the crowd. Both earlier replies described this (and plenty of students share the experience): even if you poured sweat into your draft at 2 a.m., you might still get pinged.

Here’s another angle: Instead of immediately tweaking your essay’s tone or using a “humanizer,” take a peek at Turnitin’s methodology. Their AI flag isn’t proof you cheated, it’s more like an automated nudge to reviewers: “hey, this looks a little too regular or textbooky—double-check, please.” Your best defense if flagged is ALWAYS keeping a record of your writing journey—brainstorming notes, draft stages, early saves with marked-up comments.

That said, sometimes “proving” your process isn’t feasible (lost notes, tight deadlines—life happens). This is where tools like Clever AI Humanizer come in, and I get why people suggest it. Pros: It can reshape prose to seem less robotic and formulaic, ideal if you know your writing naturally falls into that “overly academic” trap—even when it’s original. It works fast and can save you a headache if your prof is less forgiving. Cons: There’s a risk of over-smoothing—if your essay suddenly has a wild shift in tone, teachers might spot the change. Also, if you rely on it 100% for every assignment, you’re probably not correcting the root issue: writing that sounds “artificial,” even when it isn’t AI-generated.

Worth noting: Compared to similar tools, Clever AI Humanizer stands out for ease of use and subtlety, but some competitors let you adjust casualness/formality, which is useful if you need just a slight nudge and not a full rewrite.

In summary, don’t start editing solely for Turnitin’s quirks unless you see a consistent red flag. A humanizer can be a safety net, not a first step—proof of process is still the gold standard, and ultimately, context from your instructor trumps the algorithm every time.